How to Drive Real Improvements with Supplier Scorecards

How to build supplier scorecards that actually drive supplier performance instead of just reporting it.

Most companies have supplier scorecards.

Far fewer have supplier scorecards that actually matter.

That is the real problem.

A scorecard should help you manage supplier performance. It should help you see issues earlier, have better conversations, and make better decisions. But in a lot of organizations, scorecards end up as a reporting exercise. They get updated once in a while, discussed briefly, and filed away without changing much.

That is why so many scorecards feel useless. The problem is usually not the idea of scorecards. It is the way they are used.

A supplier scorecard is not useful just because it exists

Plenty of teams can point to a spreadsheet with supplier metrics.

That does not mean they have a working supplier performance process.

A scorecard becomes useful when it changes behavior. It should influence how your team prioritizes suppliers, how you run review meetings, how suppliers understand expectations, and what happens when performance drops.

If none of that happens, then the scorecard is mostly decoration.

This is why two companies can both say they “have supplier scorecards” while getting completely different outcomes. One is using scorecards to manage supplier performance. The other is just producing them.

Why supplier scorecards fail

Most scorecards fail for predictable reasons.

1. They track too many metrics

This is one of the most common mistakes.

Teams try to make the scorecard comprehensive, so they add everything. Quality incidents. PPM. On-time delivery. Cost changes. Audit findings. Lead times. Response times. Documentation issues. Capacity concerns. Meeting attendance. Escalations.

Very quickly, the scorecard becomes crowded.

When everything is measured, nothing stands out. Teams lose the signal. Suppliers do too.

A useful scorecard is not the one with the most metrics. It is the one that makes it obvious where attention is needed.

2. They are updated too infrequently

A scorecard updated only once a quarter usually arrives too late to drive day-to-day or month-to-month improvement.

By the time the data is reviewed, the team has often already moved on to the next issue. The supplier may not even remember the specific problems behind the score. The result is a backward-looking conversation with little urgency.

If the scorecard arrives late, it becomes historical reporting rather than active management.

3. They are not shared with suppliers

This one undermines the entire point.

Some teams build scorecards mainly for internal visibility. That may help with internal reporting, but it does very little to improve supplier performance if the supplier never sees the score clearly or only hears about it in fragments.

Suppliers cannot respond to a system they do not understand.

If you want scorecards to drive improvement, they need to be part of a shared conversation.

4. They are disconnected from action

This is the biggest reason scorecards become meaningless.

A supplier gets a weak score. Everyone notices it. Then nothing really happens.

No review. No development plan. No corrective action. No follow-up timeline. No ownership.

After a while, the scorecard becomes background noise. Low scores stop feeling urgent because everyone has learned that the score itself does not trigger anything.

That is the moment a scorecard stops being a management tool.

What actually makes a supplier scorecard useful

Useful scorecards are usually much simpler than bad ones.

They do four things well.

They focus on a few critical metrics

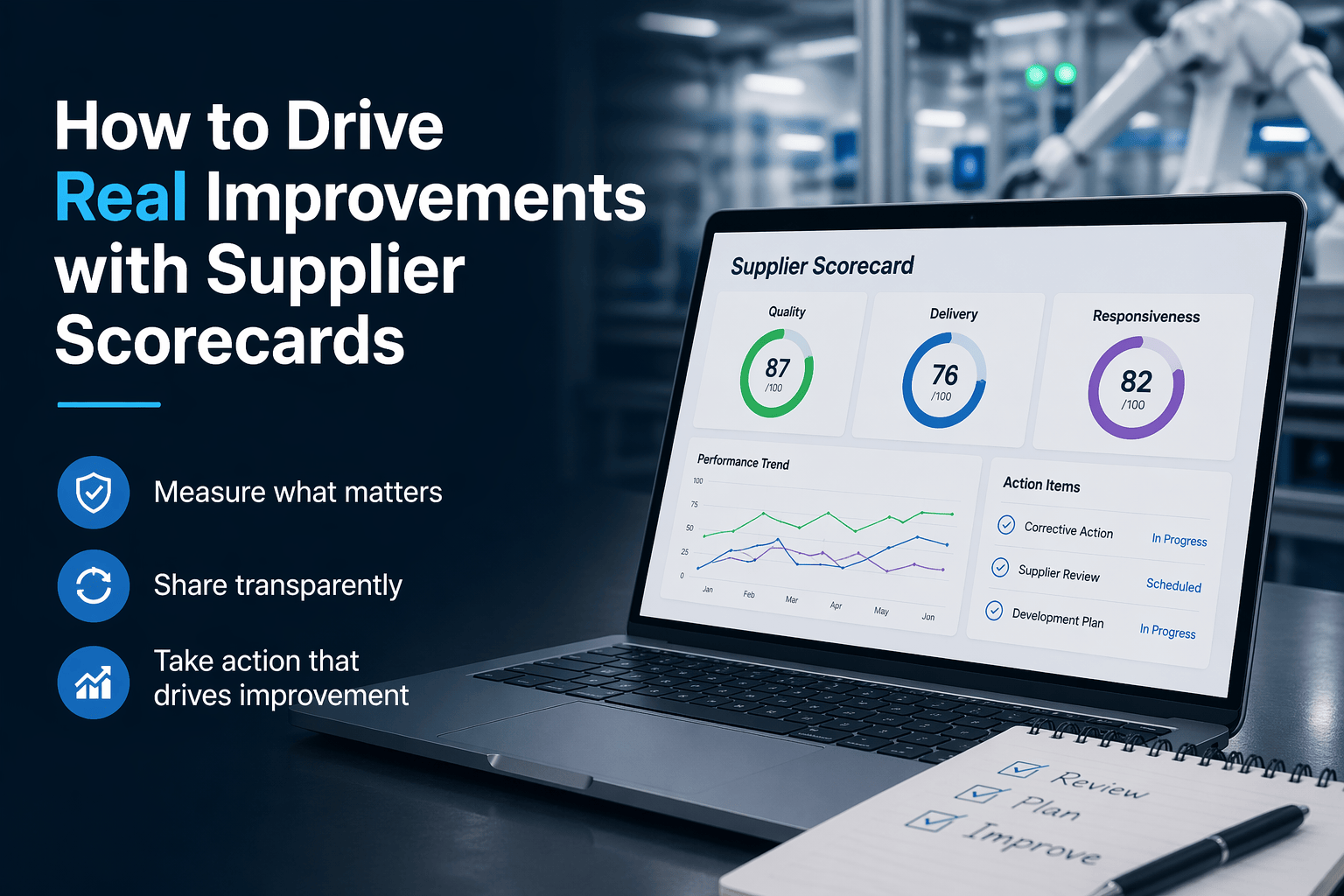

For most supplier quality and operational contexts, three categories are enough to create a strong signal:

- Quality

- Delivery

- Responsiveness

That is not because other metrics never matter. It is because most teams get better results when they focus on the measures that connect most clearly to supplier performance and to the problems a supplier causes inside your own operations.

Quality tells you whether the supplier is meeting requirements.

Delivery tells you whether they are reliable.

Responsiveness tells you whether they are acting like a real partner when problems appear.

That is already enough to support meaningful conversations and decisions.
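To make the three categories concrete, here is a minimal Python sketch of a monthly scorecard for one supplier. The metric definitions (accepted parts for quality, on-time orders for delivery, issues answered within an agreed window for responsiveness), the equal weighting, and the names `SupplierMonth` and `score_supplier` are illustrative assumptions rather than a standard formula. Substitute whatever definitions your team already uses.

```python
from dataclasses import dataclass


@dataclass
class SupplierMonth:
    """One month of raw data for a single supplier (hypothetical fields)."""
    supplier: str
    parts_received: int
    parts_rejected: int
    orders_due: int
    orders_on_time: int
    issues_raised: int
    issues_answered_in_time: int  # e.g. answered within an agreed response window


def pct(numerator: int, denominator: int) -> float:
    """Percentage helper; assumes no activity in a month counts as 100."""
    return 100.0 if denominator == 0 else 100.0 * numerator / denominator


def score_supplier(m: SupplierMonth) -> dict[str, float]:
    """Return the three category scores (0-100) plus an unweighted average."""
    quality = pct(m.parts_received - m.parts_rejected, m.parts_received)
    delivery = pct(m.orders_on_time, m.orders_due)
    responsiveness = pct(m.issues_answered_in_time, m.issues_raised)
    overall = (quality + delivery + responsiveness) / 3  # equal weights (assumed)
    return {
        "quality": round(quality, 1),
        "delivery": round(delivery, 1),
        "responsiveness": round(responsiveness, 1),
        "overall": round(overall, 1),
    }


if __name__ == "__main__":
    card = score_supplier(SupplierMonth("Supplier A", 1000, 20, 40, 34, 5, 4))
    print(card)
    # {'quality': 98.0, 'delivery': 85.0, 'responsiveness': 80.0, 'overall': 87.7}
```

Keeping the three category scores visible next to the overall number matters: in this example the average still looks healthy, while the delivery and responsiveness scores show where the conversation should start.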

They are updated regularly

A scorecard should show what is happening now, not just what happened a long time ago.

For many teams, monthly is a much better rhythm than quarterly. It is frequent enough to catch patterns early, but not so frequent that the process becomes noisy or overly administrative.

The exact cadence matters less than consistency.

What matters is that the information stays relevant enough to influence action.

They are shared transparently

A useful scorecard is not something you hide from suppliers and then reference vaguely in meetings.

It is something you share directly.

Transparency improves the quality of the conversation. It reduces ambiguity. It helps suppliers understand where they are underperforming and where they are improving. It also makes performance discussions feel less subjective because the expectations are clearer.

That does not mean the scorecard needs to be overly complex or overly formal. It just needs to be visible and understandable.

They trigger follow-up actions

This is the part that separates useful scorecards from passive reporting.

A weak score should lead somewhere.

Maybe it triggers a supplier review. Maybe it leads to a corrective action. Maybe it starts a development plan. Maybe it changes the supplier’s escalation level. Maybe it increases management attention for the next 60 days.

The exact action depends on your process.

But there should be a process.

A scorecard without follow-through teaches suppliers that the numbers do not matter. A scorecard tied to action teaches the opposite.
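One way to make sure a weak score "leads somewhere" is to write the follow-up rules down as explicit thresholds, so the next step does not depend on someone remembering to act. The Python sketch below maps a supplier's recent monthly scores to a follow-up. The thresholds (80 and 70), the three-month escalation window, and the action labels in `FollowUp` are hypothetical placeholders for whatever your own process defines.

```python
from enum import Enum


class FollowUp(Enum):
    """Hypothetical follow-up actions, from lightest to heaviest."""
    NONE = "no action"
    SUPPLIER_REVIEW = "schedule supplier review"
    CORRECTIVE_ACTION = "open corrective action"
    ESCALATE = "raise escalation level"


REVIEW_BELOW = 80.0      # assumed: a month under this triggers a review
CORRECTIVE_BELOW = 70.0  # assumed: a month under this needs a corrective action


def next_step(recent_scores: list[float]) -> FollowUp:
    """Decide the follow-up from recent monthly scores, oldest first."""
    if not recent_scores:
        return FollowUp.NONE
    latest = recent_scores[-1]
    last_three = recent_scores[-3:]
    # Three consecutive months below the review threshold means earlier
    # follow-ups did not fix the problem, so escalate.
    if len(last_three) == 3 and all(s < REVIEW_BELOW for s in last_three):
        return FollowUp.ESCALATE
    if latest < CORRECTIVE_BELOW:
        return FollowUp.CORRECTIVE_ACTION
    if latest < REVIEW_BELOW:
        return FollowUp.SUPPLIER_REVIEW
    return FollowUp.NONE


if __name__ == "__main__":
    print(next_step([90.0, 76.0]).value)        # schedule supplier review
    print(next_step([78.0, 75.0, 79.0]).value)  # raise escalation level
```

The exact rules will differ from team to team. The design point is that the rules exist and are applied the same way every month, which is what teaches suppliers that the numbers matter.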

The goal is not measurement. The goal is behavior change.

This is the key idea most teams miss.

A supplier scorecard is not valuable because it measures performance. It is valuable because it influences behavior.

It should influence how your internal team manages suppliers.

It should influence how suppliers respond to issues.

It should influence where leadership pays attention.

It should influence what happens next.

That is why bloated scorecards often underperform simpler ones. More measurement does not automatically create more control. In many cases it creates more ambiguity.

Clarity is more useful than completeness.

A simple example

Imagine two suppliers with similar recurring delivery problems.

The first supplier gets a quarterly scorecard with 18 KPIs. The delivery issue shows up somewhere in the middle. It is reviewed briefly in a business review. Nothing specific is assigned.

The second supplier gets a monthly scorecard with clear delivery, quality, and responsiveness metrics. The drop in delivery performance is visible immediately. The supplier sees it too. A review is scheduled. A recovery plan is agreed. The next month’s scorecard shows whether things improved.

That second scorecard is doing real work.

It is not just reporting. It is helping drive action.

Where most teams get stuck

A lot of teams know what a better scorecard should look like.

The problem is operationalizing it.

The scorecard sits in one spreadsheet. Corrective actions live in email. Audit findings are somewhere else. Supplier communication is fragmented. Review history depends on who saved what and where.

So even when the scorecard identifies a problem, the next steps are manual and inconsistent.

That is where the process starts to break down.

A better setup connects supplier performance data to the workflows that come after it. If a supplier score drops, the team should be able to move directly into review, follow-up, and corrective action without rebuilding context every time.

That is one of the reasons platforms like Supplios matter. The value is not just seeing the score. The value is connecting that score to the actual supplier quality work that follows, whether that means reviews, audits, action plans, or ongoing supplier communication.
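As a rough illustration of moving straight from a score drop into review without rebuilding context, the Python sketch below gathers a supplier's score history, open corrective actions, and audit findings into one record when the latest score falls by more than a set amount. The data sources are plain in-memory lists, and `ReviewPacket`, `open_review`, the five-point drop threshold, and the field names are all hypothetical. This is not a description of how Supplios or any other platform works.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ReviewPacket:
    """Hypothetical record that carries all review context in one place."""
    supplier: str
    score_history: list[float]
    open_actions: list[str] = field(default_factory=list)
    audit_findings: list[str] = field(default_factory=list)


def open_review(
    supplier: str,
    scores: dict[str, list[float]],        # monthly overall scores, oldest first
    actions: list[tuple[str, str, bool]],  # (supplier, description, is_open)
    findings: list[tuple[str, str]],       # (supplier, audit finding)
    drop_threshold: float = 5.0,           # assumed: points lost in one month
) -> Optional[ReviewPacket]:
    """Return a review packet if the latest score dropped enough, else None."""
    history = scores.get(supplier, [])
    if len(history) < 2 or history[-2] - history[-1] < drop_threshold:
        return None
    return ReviewPacket(
        supplier=supplier,
        score_history=list(history),
        # Only this supplier's corrective actions that are still open...
        open_actions=[d for s, d, is_open in actions if s == supplier and is_open],
        # ...plus its audit findings, so the reviewer does not have to hunt for them.
        audit_findings=[f for s, f in findings if s == supplier],
    )
```

Putting the trigger and the context-gathering in the same step is the point of the design: the event that flags the drop also hands the reviewer the history needed to act on it.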

Make your scorecard smaller, clearer, and harder to ignore

If your current supplier scorecard is not driving real improvements, the fix is usually not to add more metrics.

It is usually to make the scorecard more focused, more current, more transparent, and more connected to action.

That is what makes a scorecard useful.

Not the format.

Not the template.

Not the dashboard.

The behavior it drives.

Review your scorecard: what decisions does it actually influence?