Supplier Quality at Scale: How to Fix Inconsistencies Across Global Plants

How to standardize supplier quality processes across global plants without slowing teams down or forcing rigid uniformity.

In global manufacturing organizations, supplier quality often varies more by plant than by policy.

One site handles supplier corrective actions in spreadsheets. Another uses email. A third has a structured workflow in a quality system, but only locally. Audit expectations differ. Onboarding requirements differ. Reporting formats differ.

This is one of the most common reasons supplier quality becomes harder to manage as companies grow.

The issue is not that local teams are doing a bad job. The issue is that every plant ends up building its own version of supplier quality. Over time, that creates confusion for suppliers, weakens internal visibility, and makes it almost impossible to scale best practices across the organization.

Standardization matters. But many companies get it wrong by trying to standardize everything.

That approach usually fails.

The better approach is simpler: standardize the few workflows and data structures that create clarity, then leave room for local flexibility where it actually matters.

The real problem with plant-to-plant inconsistency

Supplier quality fragmentation often hides in plain sight.

If you look at one plant on its own, the process may seem reasonable. The team knows its suppliers. People know how to get things done. Local workarounds feel efficient because they were built around real operational needs.

The problem shows up when you zoom out.

Now one supplier is working with three plants in three regions and receiving different requests from the same customer. One site wants a formal SCAR response in a template. Another wants an email explanation. A third wants a corrective action logged in a portal the supplier barely uses.

From the supplier’s perspective, this does not look like flexibility. It looks like disorganization.

Internally, the consequences are just as serious. Corporate quality leaders cannot compare supplier performance consistently because every site defines and tracks issues differently. Strong practices stay local. Reporting turns into manual cleanup. Escalations are inconsistent. Leaders lose trust in the data.

This is where supplier quality stops being a local execution issue and becomes a systems problem.

Why standardization is so hard

Most multi-plant organizations already know they need more consistency. The reason it does not happen is not a lack of awareness. It is that standardization in global operations is genuinely hard.

Local autonomy is one reason.

Plants often have good reasons for doing things their own way. Their supplier base may be different. Their product complexity may be different. Regulatory requirements, customer expectations, and production realities may vary by region.

Then there are legacy processes.

Many plants have workflows that grew over years through email, spreadsheets, ERP notes, shared drives, and local quality habits. Even when those processes are inefficient, they are familiar. Replacing them can feel disruptive.

Different systems make the problem worse.

A company may have a global ERP, but supplier quality activity still lives across disconnected tools. One site tracks audits in one place, corrective actions somewhere else, and supplier scorecards nowhere reliable at all. Another site may be further along, but only within its own walls.

And finally, there is the political problem.

Global standardization efforts often fail because they are perceived as central bureaucracy. Local teams hear “global process” and assume someone is about to force a rigid model onto a messy real-world operation.

That fear is understandable. It is also why many standardization programs stall before they deliver value.

Standardize the core, not every detail

This is the mindset shift that matters.

Consistency does not mean every plant has to work in exactly the same way. It means the organization agrees on the few supplier quality processes that must be clear, visible, and comparable across the network.

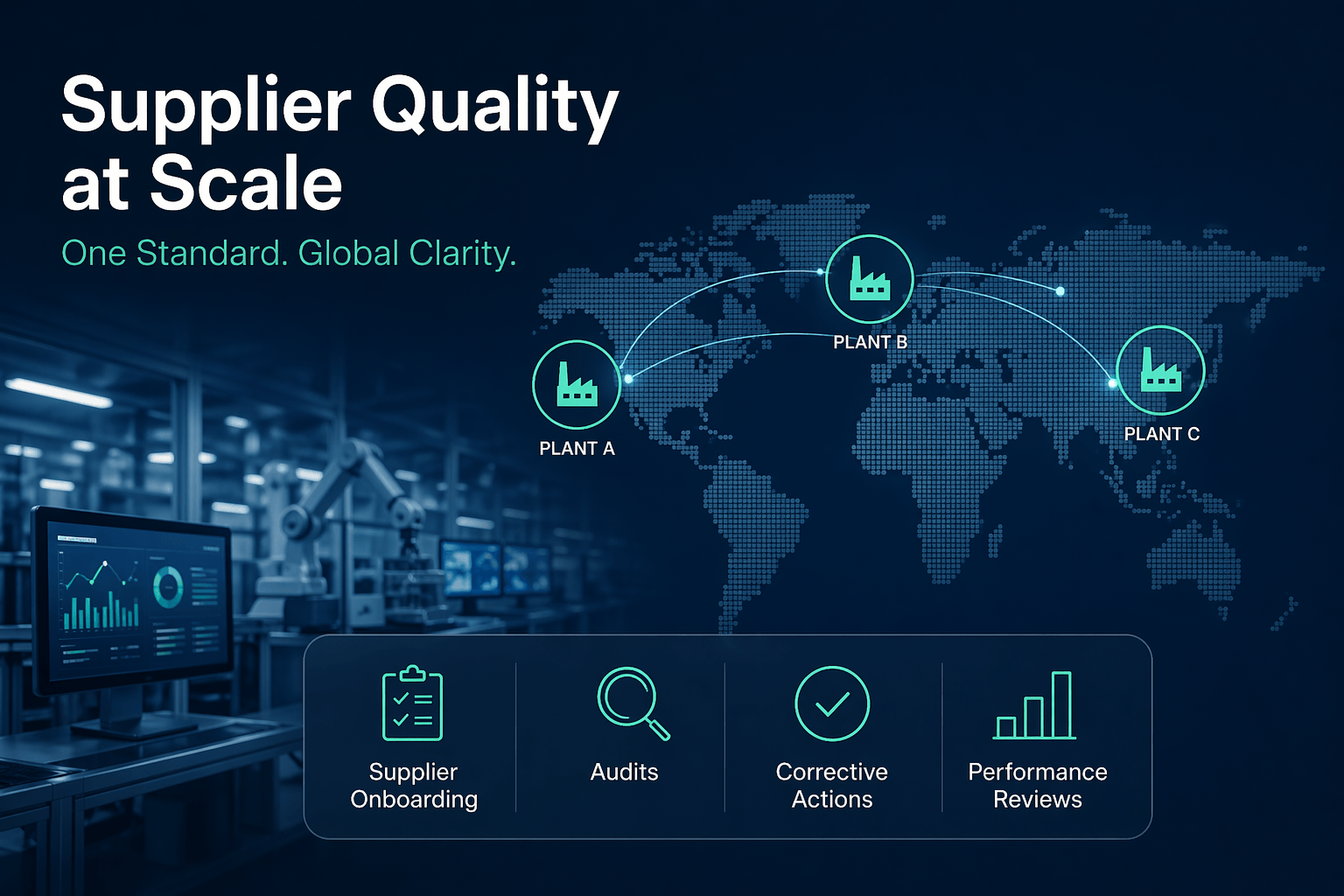

That usually starts with the core workflows:

1. Supplier onboarding

Plants do not all need the exact same local review steps. But the business should align on what data is required, which minimum qualification criteria apply, and how supplier approval status is tracked.

2. Audits

Audit scope may vary by supplier or category, but the structure should not be reinvented by site. Core templates, scoring logic, findings categories, and follow-up expectations should be consistent enough to compare results.

3. SCAR and corrective actions

This is where inconsistency causes immediate pain. If plants use different definitions, owners, deadlines, or closure criteria for corrective actions, suppliers get mixed signals and internal teams lose control. A global standard for issue stages, required fields, responsibilities, and closure rules goes a long way.
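A global SCAR standard can be thought of as a shared state machine: the same stages, the same allowed moves, and the same closure rule at every plant. The Python sketch below illustrates that idea. The stage names, the transition table, and the `REQUIRED_FOR_CLOSURE` fields are hypothetical choices for illustration, not a prescribed standard.

```python
from enum import Enum


class ScarStage(Enum):
    """Hypothetical stages that every plant would share for a SCAR."""
    OPENED = "opened"
    CONTAINMENT = "containment"
    ROOT_CAUSE = "root_cause"
    CORRECTIVE_ACTION = "corrective_action"
    VERIFICATION = "verification"
    CLOSED = "closed"


# Allowed moves between stages. Verification can send a SCAR back to
# corrective action if the fix did not hold.
ALLOWED_TRANSITIONS = {
    ScarStage.OPENED: {ScarStage.CONTAINMENT},
    ScarStage.CONTAINMENT: {ScarStage.ROOT_CAUSE},
    ScarStage.ROOT_CAUSE: {ScarStage.CORRECTIVE_ACTION},
    ScarStage.CORRECTIVE_ACTION: {ScarStage.VERIFICATION},
    ScarStage.VERIFICATION: {ScarStage.CLOSED, ScarStage.CORRECTIVE_ACTION},
    ScarStage.CLOSED: set(),
}

# Assumed closure rule: these fields must be filled before any plant closes a SCAR.
REQUIRED_FOR_CLOSURE = ("root_cause", "corrective_action", "verified_by")


def advance(scar: dict, target: ScarStage) -> dict:
    """Return a copy of the SCAR moved to `target`, enforcing the shared rules."""
    current = scar["stage"]
    if target not in ALLOWED_TRANSITIONS[current]:
        raise ValueError(f"Cannot move from {current.value} to {target.value}")
    if target is ScarStage.CLOSED:
        missing = [name for name in REQUIRED_FOR_CLOSURE if not scar.get(name)]
        if missing:
            raise ValueError(f"Cannot close; missing fields: {missing}")
    return {**scar, "stage": target}


scar = {
    "id": "SCAR-001",
    "stage": ScarStage.VERIFICATION,
    "root_cause": "Worn stamping die",
    "corrective_action": "Die replaced; inspection frequency increased",
    "verified_by": "",
}
try:
    advance(scar, ScarStage.CLOSED)
except ValueError as err:
    print(err)  # Cannot close; missing fields: ['verified_by']
```

Because every plant goes through the same `advance` rules, a supplier meets the same closure criteria no matter which site raised the SCAR.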

4. Supplier performance reviews

If scorecards are defined differently by plant, they are not really scorecards. They are local opinions in dashboard form. Global organizations need a shared baseline for supplier performance data and reporting logic, even if some plants add local metrics on top.
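A shared baseline with local metrics on top can be enforced as a simple data rule: every scorecard must carry the same baseline metrics, and local metrics may be added but may not redefine the shared ones. The sketch below assumes three hypothetical baseline metrics; the metric names and numbers are illustrative, not recommended KPIs.

```python
from dataclasses import dataclass, field

# Hypothetical shared baseline: every plant reports these metrics, defined the same way.
BASELINE_METRICS = ("ppm_defective", "on_time_delivery_pct", "scar_closure_days_avg")


@dataclass
class Scorecard:
    supplier_id: str
    plant: str
    baseline: dict[str, float]
    local: dict[str, float] = field(default_factory=dict)  # plant-specific extras

    def __post_init__(self) -> None:
        missing = [m for m in BASELINE_METRICS if m not in self.baseline]
        if missing:
            raise ValueError(f"{self.plant}: missing baseline metrics {missing}")
        clashes = set(self.local) & set(BASELINE_METRICS)
        if clashes:
            raise ValueError(f"{self.plant}: local metrics cannot redefine {sorted(clashes)}")


def compare_baseline(cards: list[Scorecard], metric: str) -> dict[str, float]:
    """Cross-plant view of one baseline metric; local extras are ignored."""
    if metric not in BASELINE_METRICS:
        raise KeyError(f"{metric} is not a shared baseline metric")
    return {card.plant: card.baseline[metric] for card in cards}


# Illustrative numbers only.
cards = [
    Scorecard("SUP-42", "Plant A",
              {"ppm_defective": 120.0, "on_time_delivery_pct": 96.5, "scar_closure_days_avg": 21.0}),
    Scorecard("SUP-42", "Plant B",
              {"ppm_defective": 340.0, "on_time_delivery_pct": 91.0, "scar_closure_days_avg": 35.0},
              local={"packaging_damage_count": 4.0}),
]
print(compare_baseline(cards, "ppm_defective"))  # {'Plant A': 120.0, 'Plant B': 340.0}
```

Plant B keeps its local packaging metric, but cross-plant comparison only ever reads the shared baseline.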

That is the pattern: define the common backbone, not every local nuance.

You do not need total process uniformity. You need a shared operating model for the parts that create supplier clarity and management visibility.

Shared data structure matters as much as shared process

Many standardization efforts focus only on workflow. That is a mistake.

Even if teams loosely follow similar steps, the organization still struggles if the underlying data is inconsistent.

If one plant categorizes supplier issues by defect type, another by business unit, and another by free-text notes, enterprise reporting will always be weak. If audit findings, corrective actions, and supplier performance data are structured differently across sites, leadership cannot trust cross-plant comparisons.

This is why shared data design matters so much.

A practical standardization effort should define things like:

- required fields for supplier issues and corrective actions

- common status stages

- owner roles

- due date logic

- supplier identifiers

- issue categories

- reporting definitions

That may sound operationally boring, but this is what creates enterprise visibility.
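The items listed above map naturally onto one shared record type. Below is a minimal Python sketch under stated assumptions: the category taxonomy, the two owner roles, and the severity-based response windows (7, 14, and 30 days) are hypothetical placeholders, not recommended values. Every field is required because none has a default.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from enum import Enum


class Status(Enum):
    OPEN = "open"
    IN_PROGRESS = "in_progress"
    CLOSED = "closed"


class Category(Enum):
    # Hypothetical shared defect taxonomy, replacing free text or business-unit labels.
    DIMENSIONAL = "dimensional"
    MATERIAL = "material"
    COSMETIC = "cosmetic"
    DOCUMENTATION = "documentation"


class OwnerRole(Enum):
    SUPPLIER_QUALITY_ENGINEER = "sqe"
    SUPPLIER_CONTACT = "supplier_contact"


# Assumed due-date logic: days allowed for a response, by severity.
RESPONSE_DAYS = {"critical": 7, "major": 14, "minor": 30}


@dataclass(frozen=True)
class SupplierIssue:
    issue_id: str
    supplier_id: str  # one global supplier ID, not a plant-local vendor code
    plant: str
    category: Category
    severity: str
    status: Status
    owner_role: OwnerRole
    opened_on: date

    def __post_init__(self) -> None:
        if self.severity not in RESPONSE_DAYS:
            raise ValueError(f"Unknown severity: {self.severity}")

    @property
    def due_on(self) -> date:
        return self.opened_on + timedelta(days=RESPONSE_DAYS[self.severity])

    def is_overdue(self, today: date) -> bool:
        # Shared reporting definition: not closed and past the due date.
        return self.status is not Status.CLOSED and today > self.due_on


issue = SupplierIssue(
    issue_id="ISS-1001",
    supplier_id="SUP-42",
    plant="Plant A",
    category=Category.DIMENSIONAL,
    severity="major",
    status=Status.OPEN,
    owner_role=OwnerRole.SUPPLIER_QUALITY_ENGINEER,
    opened_on=date(2024, 3, 1),
)
print(issue.due_on)                         # 2024-03-15
print(issue.is_overdue(date(2024, 3, 20)))  # True
```

The `is_overdue` method doubles as a reporting definition: if every plant computes "overdue" from the same record and the same rule, a cross-plant overdue count no longer depends on how each site interprets the word.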

Without a shared data structure, “global reporting” usually means exporting local data into spreadsheets and spending hours trying to normalize it after the fact. That is not visibility. That is cleanup.

What good standardization actually looks like

The strongest global supplier quality teams tend to follow a simple model.

They define global minimum standards for core workflows.

They make those workflows visible in a shared system.

They give plants room to handle local differences without breaking the common structure.

That balance matters.

A plant may need different escalation rules for a strategic supplier than another site does. A regional team may need different language or local documentation support. An audit may require site-specific checks depending on the product or process.

That is fine.

The mistake is allowing those differences to break the common process model.
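This balance can be modeled as configuration layering: a global baseline that every plant inherits, plus a short list of keys a plant is allowed to override. The sketch below is a hypothetical illustration; the key names, the default of 14 escalation days, and the choice of which keys are overridable are assumptions, not a recommended policy.

```python
import copy

# Hypothetical global baseline that every plant inherits.
GLOBAL_BASELINE = {
    "stages": ["opened", "containment", "root_cause", "corrective_action", "verification", "closed"],
    "escalation_days": 14,
    "language": "en",
    "extra_audit_checks": [],
}

# Keys a plant may change; everything else (such as the stages) is locked.
LOCAL_OVERRIDABLE = {"escalation_days", "language", "extra_audit_checks"}


def plant_config(overrides: dict) -> dict:
    """Merge one plant's overrides onto the baseline, rejecting changes to locked keys."""
    locked = set(overrides) - LOCAL_OVERRIDABLE
    if locked:
        raise ValueError(f"Plants cannot override: {sorted(locked)}")
    merged = copy.deepcopy(GLOBAL_BASELINE)  # deep copy so no plant mutates the shared baseline
    merged.update(overrides)
    return merged


# A site with a strategic supplier escalates faster and works in German.
strategic_site = plant_config({"escalation_days": 5, "language": "de"})
print(strategic_site["escalation_days"], strategic_site["stages"][-1])  # 5 closed

try:
    plant_config({"stages": ["open", "done"]})
except ValueError as err:
    print(err)  # Plants cannot override: ['stages']
```

The design choice is that flexibility is explicit: a plant can tune escalation timing, language, or extra audit checks, but it cannot quietly redefine the stages that keep data comparable across sites.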

When standardization works, suppliers still experience a coherent customer. Internal teams still produce comparable data. Leadership can still see what is happening across plants without chasing updates manually.

That is the real goal.

Not rigidity. Clarity.

How to start without creating a giant transformation project

This kind of change does not need to begin with a global process overhaul.

In fact, it usually should not.

A better starting point is to map the current differences across plants and identify where inconsistency is actually causing operational friction.

Look at questions like:

- How does each plant manage supplier corrective actions today?

- Where do audits live?

- What data is captured consistently, and what is not?

- How do suppliers receive requests from different sites?

- Which workflows are creating duplicate effort or mixed messages?

- Which metrics cannot be compared reliably across plants?
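The data question in that list can start as a simple inventory. The sketch below assumes you have written down, per plant, which fields are captured on a corrective action today; the plant names and field names are hypothetical. It reports which fields every plant captures and, for everything else, which plants capture it.

```python
# Hypothetical inventory of fields each plant captures on a corrective action today.
captured = {
    "Plant A": {"supplier_id", "defect_type", "owner", "due_date", "status"},
    "Plant B": {"supplier_id", "business_unit", "owner", "status", "notes"},
    "Plant C": {"vendor_name", "notes", "status"},
}


def field_coverage(inventory: dict[str, set[str]]) -> tuple[set[str], dict[str, list[str]]]:
    """Split fields into those every plant captures and those only some plants capture."""
    common = set.intersection(*inventory.values())
    every_field = set.union(*inventory.values())
    inconsistent = {
        name: sorted(plant for plant, fields in inventory.items() if name in fields)
        for name in sorted(every_field - common)
    }
    return common, inconsistent


common, inconsistent = field_coverage(captured)
print(common)                       # {'status'}
print(inconsistent["supplier_id"])  # ['Plant A', 'Plant B']
```

In this made-up example, only `status` is captured everywhere, and Plant C identifies suppliers by name rather than by ID. That is the kind of inconsistency that blocks cross-plant reporting, which makes it a reasonable candidate for an early standardization phase.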

That exercise usually reveals something important.

Not every variation matters.

Some differences are harmless. Others are expensive.

The priority is to standardize the areas where inconsistency is actively hurting supplier response, internal reporting, cross-site learning, or issue resolution speed.

From there, build a phased rollout.

Start with one or two core workflows. Define the baseline process and data model. Roll it out in a way plants can actually use. Then expand.

This is slower than announcing a global transformation program. But it is much more likely to stick.

Where Supplios fits

This is exactly the kind of problem supplier quality teams run into when they outgrow plant-by-plant tools.

Spreadsheets, inboxes, and local workarounds can function for a while. But they do not create shared visibility across sites, and they do not make supplier quality easier to manage at scale.

Supplios helps teams run supplier quality in a more consistent way across plants by giving them shared workflows, issue tracking, and visibility without forcing every site into the same rigid operating model.

That matters because most global teams do not need a top-down compliance project. They need a practical way to align the core processes that suppliers and internal teams depend on every day.

That is a much more useful definition of standardization.

Consistency is not rigidity

Too many supplier quality standardization efforts fail because they try to impose too much control.

The real win is not making every plant identical.

It is making the organization clear.

Suppliers should know what to expect. Quality leaders should be able to trust cross-plant reporting. Strong practices should spread instead of staying trapped inside one site.

That is what good standardization creates.

If you are trying to improve supplier quality across global plants, start by mapping where your processes differ today and ask a simple question:

Which of these differences are actually helping local execution, and which ones are just creating noise?

That is usually where the real work begins.